Data collected by Flume is ultimately pushed to a centralized store such as HDFS. Let's now look at each of the components present in the Flume architecture.

Flume Events

An event is the basic unit of data transported inside Flume. It contains a byte-array payload, optionally accompanied by headers, and is carried from the source to the destination.

Flume Agents

An agent in Apache Flume is an independent daemon process (JVM). It receives events from clients or from other agents and forwards them to the next destination, which is either a sink or another agent. Note that a Flume deployment can have more than one agent.
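The event and agent descriptions above can be modelled in a few lines of Python. This is only a conceptual sketch, not Flume's actual (Java) API: the `Event` and `Agent` classes, the `next_hop` parameter, and the sample log line are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Event:
    """Conceptual stand-in for a Flume event: a byte payload plus optional headers."""
    body: bytes
    headers: Dict[str, str] = field(default_factory=dict)


class Agent:
    """Toy agent: receives events and forwards each one to its next hop."""

    def __init__(self, name: str, next_hop: Callable[[Event], None]):
        self.name = name
        # The next hop is either a sink function or another agent's receive().
        self.next_hop = next_hop

    def receive(self, event: Event) -> None:
        self.next_hop(event)


# A plain list stands in for the final sink (hypothetical; a real sink might write to HDFS).
stored: List[Event] = []

# Two agents chained together: edge -> collector -> sink.
collector = Agent("collector", stored.append)
edge = Agent("edge", collector.receive)

edge.receive(Event(b"GET /index.html 200", {"host": "web-01"}))
```

Because an agent only knows its next hop as "something that accepts an event", the same class can forward to a sink or to another agent, which mirrors the multi-agent chaining described above.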

Refer to the image below for a better understanding of the Flume architecture. In this architecture, data generators produce data, which is then collected by Flume agents. The data collector is itself another agent, which aggregates the data coming from the various other agents.

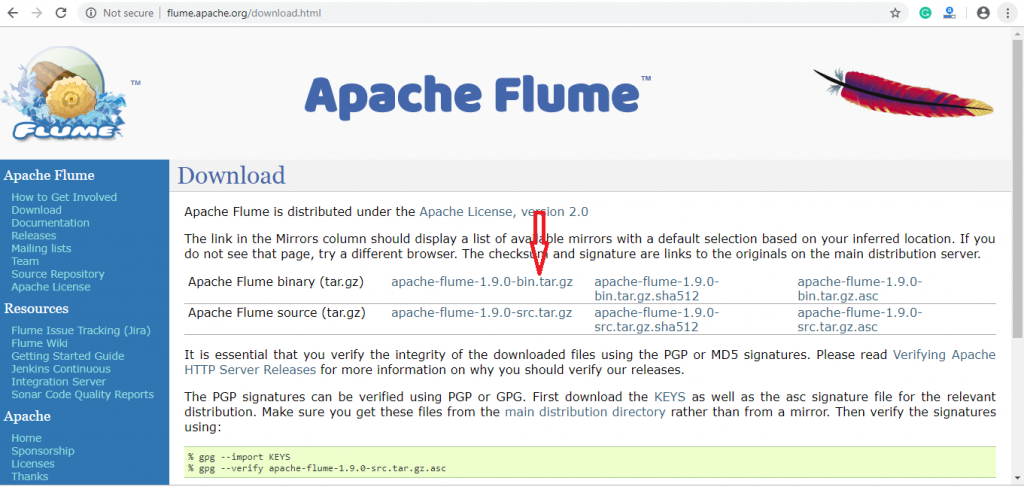

Apache Flume is a data ingestion tool responsible for collecting and transporting large amounts of data, such as events and log files, from several sources to one central data store. It is designed to copy log data or streaming data from various web servers to HDFS.

Apache Flume supports several sources, including:

‘Tail’: Data is piped from local files and written into HDFS via Flume. It is somewhat similar to the Unix command ‘tail’.
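To make the ‘Tail’ idea concrete, here is a minimal sketch of what "following" a local file means: remember how far you have read, and on each pass pick up only the complete lines appended since then. Flume itself sets this up through agent configuration rather than Python code, so the function name `read_new_lines`, the file name `access.log`, and the sample lines are assumptions made purely for illustration.

```python
import os
import tempfile
from typing import List, Tuple


def read_new_lines(path: str, offset: int) -> Tuple[List[bytes], int]:
    """Return the complete lines appended to `path` since `offset`, plus the new offset."""
    events: List[bytes] = []
    with open(path, "rb") as f:
        f.seek(offset)
        while True:
            line = f.readline()
            if not line.endswith(b"\n"):
                break  # end of file, or a partial line that is still being written
            events.append(line.rstrip(b"\n"))
            offset = f.tell()
    return events, offset


# Demo: a log file grows between two reads, as a web server log would.
with tempfile.TemporaryDirectory() as tmp:
    log_path = os.path.join(tmp, "access.log")

    with open(log_path, "wb") as f:
        f.write(b"first request\n")
    batch1, pos = read_new_lines(log_path, 0)

    with open(log_path, "ab") as f:
        f.write(b"second request\n")
    batch2, pos = read_new_lines(log_path, pos)
```

Each returned line is a byte string, matching the byte-array payload of an event. In a real pipeline, each batch would be handed to a sink that writes to HDFS instead of being kept in a local variable.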